It’s been about a year since OpenAI unleashed ChatGPT, astounding the world and sending companies scrambling to capitalize on its advanced natural language processing capabilities.

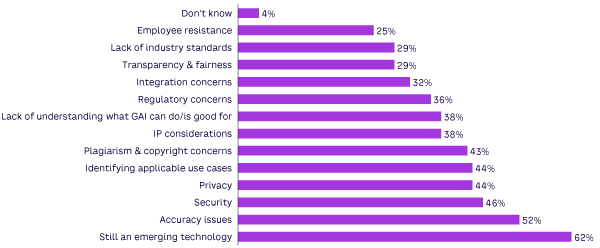

Today, the “honeymoon” for generative AI (GenAI) is nearing its end. Organizations realize that the technology, although very promising, comes with a number of adoption issues. Some are particular to GenAI; others are variations on longstanding considerations associated with adopting almost any new information technology. In a recent Cutter survey, we asked organizations about the primary challenges that are hindering them from carrying out their GenAI plans (see Figure 1). This Advisor presents some of their major concerns.

It’s Still an Emerging Technology

Organizations stress that the technology and the market are confusing, and that staying apprised of available GenAI products and their capabilities is difficult because the field is such a rapidly moving target. Consequently, selecting which products to adopt is frustrating. As one survey respondent noted, “Because the technology is so new, it is not apparent what all of the opportunities and risks are with [GenAI].”

In addition to new offerings appearing constantly, vendor viability is a concern because product functionality and features change frequently. For example, OpenAI’s recent update to ChatGPT made many third-party vendors’ GenAI offerings (especially writing assistants) largely obsolete. Many of these offerings are built on top of ChatGPT via the OpenAI API, and much of the functionality they offered as a value-add has now been incorporated into ChatGPT itself.

Accuracy of Content

The accuracy of content generated by GenAI tools remains a concern, especially with large language models (LLMs) trained on general content (e.g., ChatGPT). Currently, the technology is not reliable enough to be used without human supervision in many enterprise scenarios (e.g., customer self-service applications), which limits its value.

In addition to hallucinations, survey respondents indicated that even when GenAI tools do provide references for key points made in their output, the references can contain errors. I use ChatGPT or Bing Chat daily, and I frequently encounter references to sources that have nothing to do with the points made in these tools’ output.

The development of commercial domain-specific LLMs trained on carefully sourced enterprise data and tailored to specific applications and industries should help mitigate accuracy issues. Enterprise providers like IBM, SAP, Oracle, Microsoft, and various start-ups are all developing such models.

Security & Privacy of Customer & Other Sensitive Data

These concerns are hardly particular to GenAI adoption, but GenAI does put its own particular spin on these familiar issues. A lot of the worry expressed by business leaders has to do with the nature of GenAI tools, which basically provide a very friendly, easy-to-use natural language interface. As one survey respondent noted, “It’s important to keep in mind that what you are doing is bringing someone else’s software into your organization and exposing its (your organization’s) innermost workings.”

Despite such concerns, additional findings from our survey indicate that organizations are rapidly moving to ensure security and privacy with their use of GenAI technology.

Identifying Applicable Use Cases

There also appears to be a lack of understanding among organizations as to just what GenAI can do or is good for within the context of an enterprise setting. In short, survey respondents indicated that they are still struggling to identify applications that are relevant, practical, and safe to implement for their particular business and industry.

Plagiarism, Copyright & IP Issues

There are a number of lawsuits related to copyright and intellectual property (IP) infringement with GenAI tools. But, to the best of my knowledge, the plaintiffs in these cases (at least so far) are targeting the vendors marketing GenAI products as opposed to end-user organizations. Google, Midjourney, Stability AI (the company behind Stable Diffusion), and OpenAI are all facing lawsuits of this nature.

To address such concerns, some vendors now offer indemnity to their users if they are sued for copyright violation stemming from their use of GenAI tools. Adobe, Google, Microsoft, and Shutterstock have all announced indemnification coverage (to varying degrees) to their users. This is a growing trend among GenAI tool providers. However, it remains to be seen how such indemnification programs actually play out in court. Consequently, plagiarism, copyright, and IP issues around GenAI tools are expected to continue to cause some organizations to pause or scale back their GenAI adoption efforts.

Regulatory Issues

The problem with GenAI and regulatory compliance is that the regulations governing AI use in general vary widely across jurisdictions or are still being worked out. The widespread popularity of GenAI has only exacerbated the problem. Surveyed organizations indicated that this uncertainty adds a considerable level of confusion and uneasiness to their efforts to comply with local and international regulations governing the use of the technology.

Integration Concerns

Survey respondents cited integration concerns, both around data and around incorporating GenAI technologies into existing infrastructure and processes, as a significant problem. They caution that frequent updates to GenAI vendors’ tool functionality can lead to unpredictable results in the downstream processes and other software that such tools are integrated with.

Transparency & Fairness

Explaining the reasoning behind a decision or other output and avoiding bias when generating outcomes with GenAI remain hot-button issues. Concerns range from preventing GenAI from being used to create deepfakes, fake news, and other misinformation to keeping it from perpetuating biases that can lead to discrimination.

Given the widespread coverage in the media, some might be surprised to see transparency and fairness rank so far down the list of issues organizations say are challenging their GenAI adoption efforts. However, additional findings in our survey indicate that organizations are indeed taking steps to ensure that they use GenAI in ethical and responsible ways.

Lack of Industry Standards

Organizations are concerned that a lack of interoperability and poor compatibility among different vendors’ products could limit their ability to share data and information among various GenAI tools and other applications. They also worry that a lack of industry standards could hinder their ability to guarantee the quality, reliability, and safety of their GenAI applications’ output, which could negatively impact compliance efforts and end-user confidence.

Employee Resistance

Employee fear of getting automated out of their jobs ranks last among issues impeding organizations’ GenAI efforts. This finding is hardly surprising because employees are typically very keen on using GenAI technology in their jobs — even without management approval.

Finally, I’d like to get your opinion on the status of GenAI in the enterprise now that organizations have been living with the technology for a year. I’d especially like to get your take on any issues and concerns you see as challenging to enterprise GenAI adoption efforts. As always, your comments will be held in strict confidence. You can email me at experts@cutter.com or call +1 510 356 7299 with your comments.

[For more on GenAI, see: “Generative AI in the Enterprise: Status, Practices & Trends” and “Generative AI: A Conversation with the Future.”]