CUTTER BUSINESS TECHNOLOGY JOURNAL VOL. 34, NO. 5

Paul Clermont dives straight into the three overarching issues related to AI. The first is unintended consequences like erosion of human skills and the scope expansion that takes us from reconnecting with old friends online to channels that broadcast "un-fact-checked 'news.'" The second is unintended bias, especially for systems that could have life-changing consequences. The third is privacy. Clermont offers no-nonsense advice for dealing with these issues, advocating for laws that make organizations responsible for the algorithms they use (whether bought or built) and prohibit unexplainable AI in applications that could harm people physically or affect their lives in significant ways.

News stories and opinions about artificial intelligence (AI) are everywhere, from articles and podcasts to TED talks, think tank symposia, and philosophers’ musings. Some enthusiastically tout AI’s benefits for workers, enterprises, and society overall; others paint dystopian pictures of intrusive governments and employers surveilling and micromanaging our lives. Many foretell increased unemployment and greater disparities in income and wealth between educated elites and a proletariat consigned to miserable, low-paying jobs not yet taken by robots. Some envision AI morphing into artificial general intelligence indistinguishable from our own but able to grow into a superintelligence that can determine our fate just like HAL 9000,1 the computer in Stanley Kubrick’s 1968 science fiction film, 2001: A Space Odyssey. Scoffers (there are some) think AI is just the latest overhyped technology fad. All are a bit right and at least a little wrong.

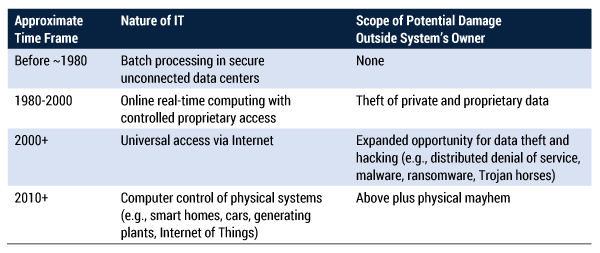

The purpose of this article is to find the space between the positive and the negative. Like any technology, AI can be used for good purposes and bad. It can be overused and misused, and it will be. The job at hand is to determine how specific dangers can be recognized and what might be required to avert them. Of course AI presents risks, but they are not entirely new. As computer applications have become dramatically more complex and interconnected, the risks posed to people by IT have grown exponentially over the years even without AI (see Table 1).

This article contends that the broadening scope of potential damage and the increasing speed with which it can happen mean innovations based on AI can no longer simply be whatever a corporation chooses to bring to the marketplace. Just as cities had to develop building codes to reduce fires, pestilences, and issues related to shoddy construction, so, too, must humans develop standards aimed at keeping IT a force for good (or at least not for ill). Too much technology has already been unleashed that’s of dubious benefit or outright harm.

There is no question whether AI applications will proliferate. They will. It’s when we get down to just-because-we-can-doesn’t-mean-we-should arguments that things get interesting. Unlike simpler forms of IT, questions of what, why, and how around AI initiatives will not necessarily be well enough addressed by technologists and managers. There are roles for sociologists, psychologists, behavioral economists, ethicists, and even historians.

In this article, we address three overarching issues for AI aside from technology: unintended consequences, unintended bias, and privacy.

Overarching Issue #1: Unintended Consequences

In theory, there are good unintended consequences, but it’s human nature to claim they were intentional if they happened, so not-so-good unintended consequences are of primary interest. They can arise from the initiative itself, its (sometimes) logical extensions, or its implementation. The biggest challenge is to think far enough ahead to recognize their possibility. Here are some unintended consequences (and examples) to consider:

- Collateral damage from an otherwise good idea
  - The Internet plus social networks and blogging and publishing sites make it easy for ordinary people to make their voices heard. They also make it possible for mischief makers, cranks, and conspiracy theorists to fill cyberspace with the misinformation, disinformation, and outright lies that have contributed greatly to political polarization.

- Radical and questionable expansion of scope of a good idea once a base of users is established
  - Social networks that made it easier for us to find and reconnect with old friends morphed into a channel for microtargeted advertising and broadcast of un-fact-checked “news.”

- Poor machine learning (ML) performance due to inadequate training
  - When the scope of training cases was overly narrow, face recognition and skin lesion evaluation did poorly on dark skin.
  - If the range of possibilities is constricted to what has happened in the last 50 years, phenomena like 100-year floods will never be identified as possibilities.2

- Overconfidence in sensors and logic
  - A vehicle on “autopilot” was faked out by a white-painted truck trailer that essentially disappeared in bright sunlight, and the driver was killed in the crash.
  - A slightly modified 35-mph speed limit sign is misread as 85, and the car takes off without noting the context (a winding country road). Never mind that there may be no place in the US with an 85-mph limit!

- Poorly designed human interface for dealing with emergencies
  - Impaired sensors on two Boeing 737 MAX 8 planes led to crashes when automated flight control software seized control of the plane and took inappropriate “corrective” action that cockpit crews were unable to override.

- Culture-driven failure to think through what could go wrong
  - An ethic of “moving fast and breaking things” does not foster hard critical thinking but succeeds admirably at breaking things.
  - A rah-rah, high-fiving culture spawns groupthink, sidelining the devil’s advocates who ask the tough questions that in retrospect should have been asked and answered.

- Erosion of human skills
  - Marine navigation by charts, compass, and dead reckoning is becoming a lost skill as GPS proliferates. What if the electronic connection is lost?
  - Terrestrial navigation by map and landmark is deteriorating for the same reason.3
  - The intuitive sense, based on experience, that something is not quite right before automatic alarms go off in a refinery is also at risk.
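The 100-year-flood example in the list above can be made concrete with a little arithmetic. The sketch below assumes that a “100-year” event has a 1% chance of occurring in any given year and that years are independent of one another; both are simplifying assumptions, and the function name is hypothetical.

```python
def prob_event_never_observed(return_period_years: float, record_years: int) -> float:
    """Chance that a record of `record_years` contains no event of the given return period.

    Assumes an annual probability of 1 / return_period_years and
    independence between years (illustrative simplifications).
    """
    annual_prob = 1.0 / return_period_years
    return (1.0 - annual_prob) ** record_years


# A 100-year flood and a 50-year training record, as in the example above.
p_missing = prob_event_never_observed(100, 50)
print(f"Chance the record contains no such flood: {p_missing:.3f}")
```

Under those assumptions, a 50-year record has roughly a 60% chance of containing no such flood at all, so an ML system trained only on that record would likely have no example to learn from.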

A methodical approach to thinking through unintended consequences must include getting explicit not just about what we want to have happen, but what we do not want, and how success in the former could risk including too much of the latter. It may also be useful to seek help from disciplines not usually associated with IT initiatives, such as behavioral economics and psychology.

Overarching Issue #2: Unintended Bias

Biases leading to unethical (if not unlawful) discrimination in algorithmic decision making have arisen as a major concern in areas like loan applications, hiring, and criminal justice. In theory, an algorithm won’t know the color of your skin without your picture, or your accent without a voice recording, so it will deal only in relevant facts with complete objectivity. If only it were that simple.

Humans, including AI designers, have biases. We’re products of the various people and cultures we’ve encountered, all of which have contributed biases. Thus, bias too easily creeps in despite our best efforts to eliminate it. As a very simple example, using past success patterns to train AI in evaluating job candidates bakes in all the biases that resulted in those patterns. In general, AI “training” approaches that use a phenomenon that reflects past human decisions as a model of excellence will perpetuate all the biases that underlay those decisions.

Oversight is needed that goes well beyond the normal skills and perspectives of technologists and professional managers. A wise organization embarking on a major AI initiative will manage appearances as well as substance. An oversight board should include people with a variety of perspectives, including sociologists, historians, psychologists, lawyers, and maybe even community organizers.

It is very hard to define and recognize bias. Helpful criteria like the four-fifths rule4 may “prove” lack of bias along a single dimension like race or gender but could miss intersectional disadvantage for non-white women.
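To see how a single-dimension test can miss intersectional disadvantage, consider a minimal sketch of the four-fifths rule as it is commonly stated: a group's selection rate should be at least 80% of the rate of the most-selected group. The applicant counts below are invented purely for illustration, and the function names are hypothetical.

```python
from collections import defaultdict

# Hypothetical counts, invented for illustration only:
# (race, gender) -> (applicants, selected)
OUTCOMES = {
    ("white", "men"): (100, 50),
    ("white", "women"): (100, 60),
    ("non-white", "men"): (100, 60),
    ("non-white", "women"): (100, 40),
}


def impact_ratio(outcomes, key):
    """Lowest group selection rate divided by the highest.

    `key` maps a (race, gender) tuple to the label used for grouping.
    """
    applicants = defaultdict(int)
    selected = defaultdict(int)
    for group, (n_applied, n_selected) in outcomes.items():
        applicants[key(group)] += n_applied
        selected[key(group)] += n_selected
    rates = [selected[g] / applicants[g] for g in applicants]
    return min(rates) / max(rates)


def passes_four_fifths(ratio, threshold=0.8):
    """True when the lowest rate is at least four-fifths of the highest."""
    return ratio >= threshold


by_race = impact_ratio(OUTCOMES, key=lambda g: g[0])
by_gender = impact_ratio(OUTCOMES, key=lambda g: g[1])
intersectional = impact_ratio(OUTCOMES, key=lambda g: g)

for label, ratio in [("race", by_race), ("gender", by_gender),
                     ("race x gender", intersectional)]:
    print(f"{label}: ratio={ratio:.2f}, passes={passes_four_fifths(ratio)}")
```

With these made-up counts, the ratio is about 0.91 by race and about 0.91 by gender, so both single-dimension checks pass. Across the four combined groups it falls to about 0.67, because non-white women are selected at a rate of 40% against 60% for the most-selected groups.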

The auditing model for financial reporting would make sense if there were a bias-recognizing equivalent of Generally Accepted Accounting Principles (GAAP)5 to refer to, plus a certification process for practitioners. Absent that, a self-described “bias auditor” may not be reliable; the incentives are wrong versus those of a licensed third party like a CPA or a government agency with a charter like the US Securities and Exchange Commission (SEC). Some kind of GAAP equivalent will surely evolve to provide a template for bias “accounting” just as GAAP did for financial accounting. Like GAAP, it will provide a certain amount of latitude, but adherence to it, absent falsification of data, will provide some immunity against legal action. New careers will emerge to define and practice it and monitor adherence, just as with accountancy.

Even an algorithm reasonably believed to be unbiased should not be the final authority in every case, a notion that extends to potentially life-changing decisions for individuals and their families like home and business loans, hiring, college admissions, and elements of the criminal justice system like bail-setting, sentencing, and parole decisions. By definition, an algorithm yields a number that is compared to a boundary criterion. No numbering system that attempts to quantify things that are not precisely measurable is good enough to decide when the algorithmic result is close to the boundary. In that situation, basic fairness and common sense call for human intervention. Could that introduce bias? Yes, but a decision that could change the life of a person and family should not be made on the equivalent of a coin toss.6 Human judgment should always have a place in such situations.
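One way to picture this principle is a decision rule that sends close calls to a person instead of deciding them automatically. The sketch below is only an illustration: the function name, the score scale, the cutoff, and the width of the review band are all hypothetical, and choosing that width is itself a judgment call.

```python
def route_decision(score: float, threshold: float, review_margin: float) -> str:
    """Return 'approve', 'decline', or 'human_review' for a numeric score.

    Scores within `review_margin` of the threshold are treated as too close
    to call and are referred to a person (all values are hypothetical).
    """
    if abs(score - threshold) <= review_margin:
        return "human_review"
    return "approve" if score > threshold else "decline"


# Illustrative cutoff of 650 with a review band of 20 points on either side.
for applicant_score in (700, 655, 640, 600):
    print(applicant_score, route_decision(applicant_score, threshold=650, review_margin=20))
```

Scores well clear of the boundary are decided by the algorithm, while those near it are referred to a human reviewer.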

Overarching Issue #3: Privacy

Privacy issues are not unique to AI, but AI’s hunger for data for ML training has upped the threat. This was brought home by the recent enforcement actions taken by the US Federal Trade Commission (FTC) for misuse of facial recognition technology by Everalbum, which scraped millions of people’s pictures and used them without the consent of the pictured for commercial purposes.7 It will be interesting to see the implications of this on current and future development of AI technologies and applications: will it hinder innovation?

Shortcuts like Everalbum’s that take advantage of loopholes and ambiguities in existing law will be prohibited eventually, some by legislation, some by judicial opinions. Innovation will be slowed, but innovation in medical science is likewise slowed by laws governing ethically permissible protocols for human tests and experiments. As a general principle, ethics trump expediency, but specific situations may call for more nuanced attention to risk and transparency.

Internet trolling is an increasing problem, made worse by AI’s ability to create seemingly authentic deepfake photos of scenes that never happened or recordings of words never spoken. Their capacity for mischief requires no elaboration.

Dealing with the Issues

As the ability of digital technology and IT to cause harm grows, so must the legal and regulatory infrastructure to prevent, or at least minimize, disasters and assign liability when they happen. The law always lags technology, and this is as it should be, though not by too much. The EU instituted its General Data Protection Regulation (GDPR) in 2018, largely copied by the US state of California in 2020; when two such sizable chunks of the world or the US market act, their rules become a de facto standard.8 The EU also recently proposed new laws to govern AI, ensuring oversight and limitations in the interest of public safety and privacy (see sidebar). Although restrictions like this are new for IT, they’re established practice elsewhere, including:

- Building codes

- Requirements for approval by licensed professional engineers

- Periodic auto safety inspections

- Underwriters Laboratories (UL) certification of electrical products

- US Federal Aviation Administration (FAA) and US Food and Drug Administration (FDA) certifications for safety of air travel, food, and pharmaceuticals

The EU Gets into the Act

As this article was being written, the EU put forth a framework for regulating AI that could become a de facto standard. As with the GDPR, it fills a gap that was unlikely to be filled in the near term by others. It recognizes that different forms of AI pose different levels of risk:1

- Low-risk AI only requires transparency. For example, a deepfake photograph would have to be labeled as such. Otherwise, it would be essentially unregulated.

- High-risk AI, which includes most of the examples cited in this article, would require not just transparency but thorough risk assessment, user education, and adequate human control before being brought to market.

- Unacceptable-risk AI, such as applications to manipulate public opinion or develop social credit scores (as in China), would be prohibited.

1Marcia, Valeria, and Kevin C. Desouza. “The EU Path Towards Regulation on Artificial Intelligence.” The Brookings Institution, 26 April 2021.

There should be standards for policies and practices when creating the software equivalent of guardrails, fire doors, containment vessels, and intrusion detectors. There need to be penalties when something goes wrong and those standards were not met. These may limit AI “creativity” (i.e., unorthodox, out-of-nowhere actions that just might work), but who knows? Such an action beat the Go champion, but Go is, well, just a game.

Legal principles need to be established, including:

- Unexplainable AI does not belong in any application that could harm people physically or affect their lives in significant ways. The same applies to black-box algorithms sold by vendors that refuse to explain their “proprietary” workings. Buyers must receive an explanation, acknowledge receipt, and possibly sign nondisclosure agreements.

- Organizations are responsible for algorithms they use, whether bought or built. If bought, relief would require that algorithms don’t behave as advertised, similar to how an auto accident caused by a design or factory defect can relieve the driver of some responsibility.

Laws or regulations will also be required to:

- Ensure the spirit of principles like those established in the US Bill of Rights is applied to government activities (e.g., requirements for warrants).

- Limit the use of algorithms for close calls on potentially life-changing decisions such as home loans, business startup loans, hiring, and college admission.

- Establish what constitutes a “legitimate interest” for third parties and governments to access data we have no choice but to generate about ourselves.

- Obtain a better balance between freedom of speech and freedom from malicious misinformation and disinformation. AI can be highly useful here to improve fact-checking. Even more important, AI can be used to broaden people’s perspectives on events rather than how it is now used to narrow and intensify them, fostering political polarization.

- Recognize that Internet communications services are not the same as voice telephone services because they can monitor content, and experience is showing they need to. The viral spread of absurd conspiracy theories and proliferation of lies and trolling are giving free speech a bad name.

Major emphasis on cybersecurity is not a matter of choice. There are more algorithms to steal, or worse, hack. There is more data to steal or pollute. Risks really grow with the Internet of Things:

- We must carefully limit our trust in hackable AI to safely govern infrastructure like power grids, nuclear plants, hydroelectric dams, air and vehicle traffic control, and drinking water supplies.

- We may need better firewalls to separate remote access control from the provision of information. For example, there’s a huge difference between downloading potentially hackable engine management software to a moving vehicle via the Internet and downloading GPS information the driver needs in real time.

In short, just because we can do something that’s “cool” doesn’t mean we should.9

Picturing an AI-Enabled Future

The future can look quite good if governments and technology providers deal effectively with the overarching issues. Beyond that, the most tangible changes for many people will be in the workplace:

- Use of robots and robotic process automation for repetitive and unambiguously describable tasks will increase wherever it’s economical. Where it’s not, people’s jobs will be made as robotized as possible by industrial engineers, as in Amazon fulfillment centers. (Taylor lives on!10) In the physical realm, some processes may be amenable to “cobots”: robots that work directly with a person to do parts of a job that are too finicky, strenuous, or dangerous for a human. In effect, the robot amplifies the person’s capability. In the office realm, we’re already seeing a trend toward customer service phone systems that use AI to determine from the customer’s words where to route the call if it can’t be addressed by the computer alone; in effect, the person is amplifying the capability of the machine.

- Paraprofessional or lower-level professional tasks entailing a lot of search and pattern recognition will be taken over by AI, amplifying the person’s ability and productivity.

- New jobs in AI design, monitoring, and ML training will offset some lost jobs.

- A high degree of worker surveillance will be irresistible to employers unless it’s regulated.

- We may at long last see questioning of the continuing appropriateness of the 40-hour workweek, the standard for almost a century after a century of rapid decline from 72 or 84 hours.

On the more sobering side, workplace prospects for the poorly educated continue to dim. Failure of governments to address “left behind” people and regions will lead to increasing political unrest with predictably negative consequences.

Conclusion

The risks to society in general from AI are real and important enough right now to demand attention from a lot of very talented, busy people. That said, we need to put the power of AI in perspective. As sophisticated and brilliant as some AI applications are, they do not come near artificial general intelligence (AGI). Consider three milestones.

Championship-level checkers playing by a computer dates back to 1962.11 It was based, like today’s AI, on ML (i.e., playing against itself a huge number of times, gradually getting better by learning through trial and error). It took 34 years for computers to advance to the level of grandmaster chess and another 20 to conquer Go. At their core, these feats, however brilliant, were the same: pursuit of a clearly defined goal with strict rules governing the moves you can make to get there. In short, AI applications to date are idiot savants, able to do a limited range of tasks with superhuman brilliance … and nothing else.

When we apply our human intelligence to addressing AI’s “right now” challenges, we can safely ignore the prospect of imminent AGI that could morph into superintelligence, whatever that is. Time and energy spent on risks that certainly will not materialize in the next decade or two (if ever) are not just wasted; they are a counterproductive diversion.

Sufficient unto the day is the evil thereof.

— Sermon on the Mount, Matthew 6:3412

References

1See Wikipedia’s “HAL 9000.”

2Farnam Street. “The Lucretius Problem: How History Blinds Us.” Farnam Street Media, accessed May 2021.

3I’ve seen myself becoming increasingly dependent on GPS directions in the car, and not just for the first trip or two to a new destination.

4Mondragon, Nathan. “What Is Adverse Impact? And Why Measuring It Matters.” HireVue, 25 March 2018.

5“Generally Accepted Accounting Principles (GAAP).” Investopedia, 22 February 2021.

6This stricture does not apply to non-life-changing decisions such as auto loans. If the algorithm thinks you can’t afford the Cadillac, a Chevrolet will still get you where you need to go.

7Art photographs often include random people. Usage is permitted for art but not for purely commercial purposes like advertising.

8Whatever its imperfections, it filled a void. Only the US had comparable void-filling power, but its political structure at the time had a strong bias toward inaction.

9Does my clothes dryer really need an IP address?

10Frederick Winslow Taylor was an efficiency expert from the early 20th century who turned time and motion study into a science.

11In the 1960s, the checkers problem was addressed by “brute force” techniques, evaluating a tree of all potential moves multiple generations in the future and choosing the one with the highest probability of a good outcome. By the 1980s, this had evolved to pattern recognition. All of these approaches were hindered by lack of sufficient computer processing power.

12See Wikipedia’s “Sufficient unto the day is the evil thereof.”